Federal Officials Should Disclose Vulnerabilities for Security’s Sake

When the government finds a security flaw in software or hardware, it faces a difficult choice: Should it disclose the vulnerability so that it can be patched before criminals exploit the same flaw, or should it keep the flaw secret and use it to investigate crimes and gather intelligence?

To decide, the government weighs harm against harm in an exercise known as the Vulnerability Equities Process (VEP). While the precise details are classified, the Obama administration provided an overview of the criteria it uses to determine whether to disclose a newfound vulnerability, commonly known as a zero day.

Those factors include:

- The extent to which the vulnerable system is used in core internet infrastructure, other critical infrastructure and the U.S. economy

- The risk in leaving it unpatched

- How badly the government needs the intelligence it might gain

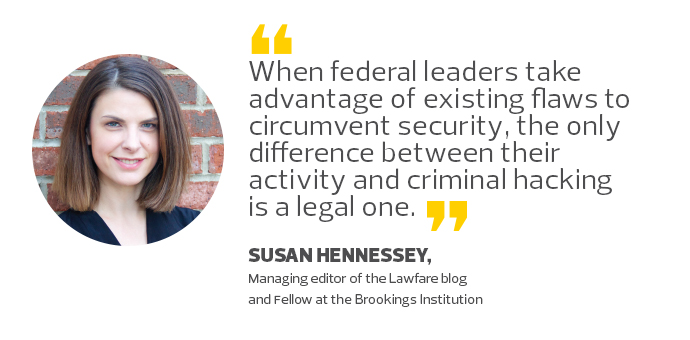

Generally, the government serves its goals best by disclosing vulnerabilities. When federal agencies take advantage of existing flaws to circumvent computer security, the only difference between their activity and criminal hacking is a legal one. As a technical matter, the two are indistinguishable.

That means any vulnerability the government exploits could be used by criminals as well. When the government sits on a newly discovered vulnerability, it exposes innocent users to increased risk.

For that reason, the White House has designed the process with a strong bias toward disclosure. (The National Security Agency notes that the government discloses 91 percent of zero-day vulnerabilities submitted through the formal process.)

Why Public Disclosure of Threats Is Vital

Critics often point to specific instances where they believe the government unjustifiably failed to disclose flaws in widely used systems. They then use those examples as evidence of a broader failure of the VEP.

In addition, critics call for further transparency to ensure the public is adequately informed. Those are fair points, and thoughtful proposals for reforming the reporting process exist.

But federal officials and observers have overlooked what occurs in the overwhelming majority of cases, where the government discloses a threat. The primary reason for disclosing a zero-day vulnerability is so that the flaw can be patched and the risk removed. Yet, in a speech in October, Curtis Dukes, then the NSA’s director of information assurance, said none of the high-profile intrusions of the past two years, including those against government systems, were the result of zero-day vulnerabilities. Instead, attackers took advantage of poor cyberhygiene, such as unpatched systems, and used known techniques such as spear phishing.

The Best Path Forward

VEP reform should not focus on insufficient disclosures but instead center on insufficient responses to known vulnerabilities.

Because the government does not disclose which vulnerability reports are a result of the VEP, it is impossible to publicly track the rate at which patches are deployed. Still, instructive examples can be found elsewhere.

The U.K.’s Government Communications Headquarters (GCHQ) publicizes when it shares vulnerabilities with industry, and in spring 2016, it reported two vulnerabilities in the Firefox browser to Mozilla.

If a formalized VEP is to better reflect the cybersecurity goals of government, then reforms should require industry and agencies to take steps to address the vulnerabilities they learn about through the process.

In other words, the vulnerability equities process should not require disclosure for the sake of disclosure, but disclosure for the sake of security.