5 Tips to Effectively Measure Security

Is your security program effective? Can you prove it in a way that senior IT and agency leaders can understand? An effective security metrics program can provide this type of evidence and justify the significant investments in information security made by your organization.

However, building an effective program requires careful planning. It’s easy to cobble together available data into some charts and graphs, but it’s much more difficult to paint a meaningful picture of the contributions that information security makes to your organization’s bottom line.

Characteristics of Good Security Metrics

As you begin to develop your metrics program, there are some ground rules to keep in mind. Following these five principles will help you focus on the development of effective measures of success:

1. Metrics should demonstrate the effectiveness of your security program

These measures might evaluate the direct actions of security staff or the downstream effects on other technology professionals and end users. The bottom line is that they should clearly demonstrate, in the language of the agency, the return on security investment.

2. Metrics should be geared to the target audience

Present different metrics to each audience: the technical professionals who manage the security function, IT managers and agency leaders. For example, while IT leaders are certainly interested in the types of vulnerabilities detected during a scan, the typical agency executive would not know what to do with this type of information. Be sure to know your audience and create different tiers of metrics for different audiences when appropriate.

3. Metrics should be quantitative

If you can’t find a good way to measure the concept you’re trying to convey, it’s probably not a good candidate for your metrics program. I’ve seen a number of organizations attempt to build metrics using qualitative measures (such as “high risk,” “medium risk” and “low risk”) or pseudo-quantitative measures (such as rating risks on a 1 to 10 scale), and these simply don’t work well. They lead to discussions about the subjectivity of the measure and put the focus on the rating rather than the results.

4. Metrics should be designed around readily available data

This should go without saying, but before you invest time in designing a metric, be sure that you will be able to get the data to support it. If supporting systems aren’t capable of providing a data element, either redesign those systems, choose an alternative data point or source, or abandon the idea.

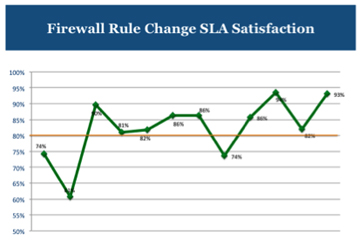

5. Metrics presentations should include targets

You should provide quantitative targets for your metrics that help your audience answer the question, “How are we doing?” For example, the chart shown above includes an orange target line indicating that the desired performance level for firewall rule change requests is meeting the service-level agreement for 80 percent of requests. Showing this target on the chart allows managers to quickly assess the status of this metric and look at trends over time.
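As a rough illustration of a metric with a target, the sketch below computes the monthly percentage of firewall rule change requests completed within the service-level agreement and compares it with the 80 percent target mentioned above. The three-day SLA, the record layout and the sample figures are all assumptions made up for this example, not data from a real program.

```python
from collections import defaultdict

SLA_DAYS = 3           # hypothetical SLA: request completed within 3 days
TARGET_PERCENT = 80.0  # target level taken from the example in the text

# Illustrative, made-up records: (month, days taken to complete the request)
requests = [
    ("2024-01", 2), ("2024-01", 5), ("2024-01", 1), ("2024-01", 3), ("2024-01", 4),
    ("2024-02", 1), ("2024-02", 2), ("2024-02", 3), ("2024-02", 2), ("2024-02", 6),
]

def sla_compliance_by_month(records, sla_days):
    """Return {month: percent of requests completed within sla_days}."""
    totals = defaultdict(int)
    met = defaultdict(int)
    for month, days in records:
        totals[month] += 1
        if days <= sla_days:
            met[month] += 1
    return {month: 100.0 * met[month] / totals[month] for month in sorted(totals)}

compliance = sla_compliance_by_month(requests, SLA_DAYS)
for month, pct in compliance.items():
    status = "meets target" if pct >= TARGET_PERCENT else "below target"
    print(f"{month}: {pct:.0f}% within SLA ({status})")
```

Reporting each month next to the target gives the audience both the current status and the trend at a glance.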

Following these five tips will help you create a security metrics program that stands the test of time.

Cover the Bases: Security Metrics Categories

As you think about the types of information to include in your security metrics initiative, consider three different categories: security operations metrics, IT operations metrics and user behavior metrics. Each of these three categories provides a different view of the effectiveness of your security program.

Security operations metrics measure the direct impact of an information security team. For example, you might include the percentage of mobile devices in your organization that are encrypted or the percentage of firewall rule requests satisfied within the terms of your service-level agreement. The key questions to ask as you explore metrics in this category are, “What are the essential activities that our security team performs, and how can we best assess their effectiveness?”

However, you can’t get a complete picture of a security program without looking at its downstream effects on technology professionals and end users, so include a healthy mix of IT operations and user behavior metrics in your program as well. For example, an IT operations metric might track the responsiveness of system administrators to security vulnerabilities by monitoring the number of open critical vulnerabilities and the typical amount of time required to resolve a vulnerability.
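The two vulnerability measures just described could be computed along the following lines. This is a minimal sketch: the record layout, the severity labels and the sample dates are hypothetical, and the median is used here as one reasonable reading of “typical” time to resolve.

```python
from datetime import date
from statistics import median

# Hypothetical records: (id, severity, date opened, date resolved or None if still open)
vulns = [
    ("V-1", "critical", date(2024, 3, 1), date(2024, 3, 4)),
    ("V-2", "critical", date(2024, 3, 2), None),
    ("V-3", "high", date(2024, 3, 2), date(2024, 3, 20)),
    ("V-4", "critical", date(2024, 3, 5), date(2024, 3, 12)),
    ("V-5", "critical", date(2024, 3, 10), date(2024, 3, 15)),
]

def open_critical_count(records):
    """Count critical vulnerabilities that have not been resolved yet."""
    return sum(
        1 for _, severity, _, resolved in records
        if severity == "critical" and resolved is None
    )

def median_days_to_resolve(records):
    """Median days from open to resolution, over resolved vulnerabilities only."""
    durations = [
        (resolved - opened).days
        for _, _, opened, resolved in records
        if resolved is not None
    ]
    return median(durations) if durations else None

print("Open critical vulnerabilities:", open_critical_count(vulns))
print("Median days to resolve:", median_days_to_resolve(vulns))
```

The median is less sensitive than the mean to a single long-running ticket, which makes the trend easier to explain to a non-technical audience.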

Similarly, as a user behavior metric, you might track the number of user accounts that are compromised over time. While security professionals might complain that these are indirect measures, that’s precisely the point. One of the critical responsibilities of a security program is building a culture of security within the organization, and these measures evaluate the effectiveness of that culture.

Things to Avoid

As you work to create metrics, keep in mind a few pitfalls that other organizations have encountered. You would do best to steer clear of three particular types of metrics:

- “My device is cool” metrics: Security professionals love to show off their devices, but measures like “number of intrusion prevention system alerts” and “number of blocked port scans” aren’t really very helpful. They lack the “so what?” that leaders need to gauge effectiveness.

- Large meaningless numbers: IT professionals are notorious for bragging about the massive numbers associated with their services. A figure such as “number of viruses blocked by our spam filter” might sound impressive, but it’s not really helpful. If they were blocked, who cares? You’ll be much better off tracking the number of actual virus infections.

- Gratuitous division: If you’re going to divide anything, that’s a warning sign that you need to rethink your metric. Division tends to create confusing metrics, often labeled “indexes,” that only have meaning relative to themselves. While it’s sometimes OK to use ratios, such as “number of account compromises per user,” make sure that you can easily explain the metric and how your activity can change it.
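When a ratio is justified, keeping it to a single, plainly named division makes it easier to explain. The sketch below expresses account compromises relative to the user population. Scaling to “per 1,000 users” is an assumption added here for readability, and the quarterly figures are invented for illustration.

```python
def compromises_per_1000_users(compromised_accounts, total_users):
    """Account compromises per 1,000 users: one division, easy to explain."""
    if total_users <= 0:
        raise ValueError("total_users must be positive")
    return 1000.0 * compromised_accounts / total_users

# Illustrative, made-up figures: (quarter, compromised accounts, total users)
quarters = [("Q1", 12, 4000), ("Q2", 9, 4500)]

rates = {
    quarter: compromises_per_1000_users(compromised, users)
    for quarter, compromised, users in quarters
}
for quarter, rate in rates.items():
    print(f"{quarter}: {rate:.1f} compromises per 1,000 users")
```

Both inputs are counts that a manager can verify directly, and the activity that changes the ratio (fewer compromised accounts) is obvious from its name.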

Building a robust, effective metrics program is a great way to demonstrate the value that your security activities bring to the organization. Adapting language to the vocabulary of your agency and designing metrics that directly measure critical activities can help you build a program that is respected both in the IT trenches and by agency executives.