3 Tips to Ensure Smart Physical Security for Your Agency's Data Centers

Data centers are a clear target in cyberwarfare, but not all the threats they face are based in the cyber world. Data center managers must also take physical security into account. In addition to cybersecurity measures, agencies need to maintain data center operations, keep unwelcome visitors out of their facilities and protect IT assets.

Federal IT managers should follow several best practices to maintain physical security and keep their data centers up and running.

1. Focus on the Biggest Threat: Power

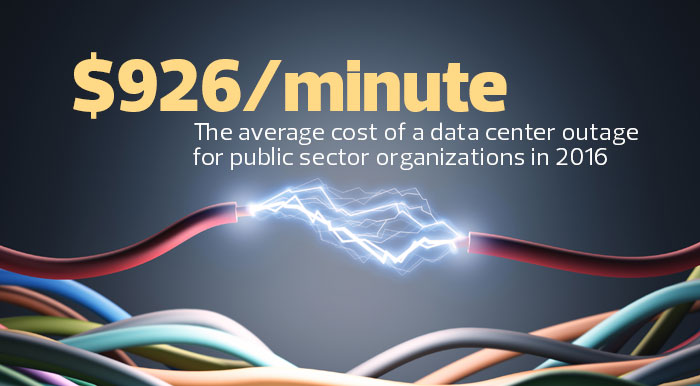

Problems with power may occur due to unforeseeable system failures, acts of God or deliberate sabotage. Regardless of the cause, the lack of good, clean power is responsible for 31 percent of unplanned data center outages, according to a January 2016 Ponemon Institute study. Denial-of-service attacks may make the headlines, but agencies are far more likely to have an outage from a power problem than from a cyberattacker.

Efforts to address issues involving data center power are among the best investments agencies can make in physical security. The Uptime Institute, a global data center consulting firm, suggests that every IT manager strive to achieve Tier III: “Concurrently Maintainable” status in the data center. At Tier III, every piece of equipment is dual-powered, with a fully redundant power distribution system.

Tier III data centers can lose an uninterruptible power supply (UPS) or generator power and keep on running. More important, having a Tier III design lets data center operators perform regular maintenance on power equipment and regularly test capacity and failover without threatening actual operations. Many data center failures that can be traced to UPSs and generators are simply the result of unmaintained equipment, so testing and maintaining power hardware is an excellent way to keep things running smoothly.
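One way to check the dual-power requirement is to list which devices would go dark if a single UPS or generator path failed. The following is a minimal sketch, not an Uptime Institute tool: the `Device` model, the feed names such as `ups-a`, and the sample inventory are all hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Device:
    """A racked device and the power paths feeding it (hypothetical model)."""
    name: str
    feeds: Tuple[str, ...]  # names of upstream UPS/generator paths (assumed labels)


def single_points_of_failure(devices):
    """Return {source: [devices that lose all power if that source alone fails]}."""
    sources = {feed for d in devices for feed in d.feeds}
    report = {}
    for source in sorted(sources):
        # A device survives the loss of `source` only if another feed remains.
        dark = [d.name for d in devices if not (set(d.feeds) - {source})]
        if dark:
            report[source] = dark
    return report


# Illustrative inventory; the names are made up for this example.
devices = [
    Device("db-01", ("ups-a", "ups-b")),
    Device("web-01", ("ups-a", "ups-b")),
    Device("legacy-fw", ("ups-a",)),  # single-corded device
]

print(single_points_of_failure(devices))
```

An empty report suggests that any one power path could be taken down for maintenance or testing without affecting the listed devices. It says nothing about capacity, which still has to be tested on the real equipment.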

2. Have High Standards for Access Control

Another significant cause of data center downtime is human error. Agencies cannot do much about a staff member typing “shutdown” in the wrong window, but they can make it less likely someone will unplug the wrong device in a rack full of gear.

A good first step is to focus on cleanliness and documentation. Data centers should not contain uninstalled gear, cardboard boxes, spare cables or unlabeled devices, all of which invite human error or insider attack.

SOURCE: "Cost of Data Center Outages," Ponemon Institute, January 2016. Photo credit: ktsimage/ThinkStock

Nothing should go into, or come out of, a data center without a paper trail (real or virtual), and nothing should go into a rack without being properly labeled and documented in an online system.
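The labeling and documentation rule can be checked during a walkthrough by comparing what is in a rack with what the records say. This is a rough sketch under stated assumptions: the `audit_rack` function, the asset label format and the rack-unit positions are hypothetical and do not reflect any particular inventory system.

```python
def audit_rack(observed, inventory):
    """Compare the physical contents of a rack with its documentation.

    observed:  {rack_position: asset_label, or None if the device has no label}
    inventory: {asset_label: rack_position} as recorded in the online system
    Both structures are assumptions made for this example.
    """
    findings = []
    for position, label in sorted(observed.items()):
        if label is None:
            findings.append(f"U{position}: unlabeled device")
        elif label not in inventory:
            findings.append(f"U{position}: {label} is not documented")
        elif inventory[label] != position:
            findings.append(
                f"U{position}: {label} is documented at U{inventory[label]}"
            )
    # Anything documented for this rack but never seen is also a finding.
    seen = {label for label in observed.values() if label is not None}
    for label in sorted(set(inventory) - seen):
        findings.append(
            f"{label}: documented at U{inventory[label]} but not found in the rack"
        )
    return findings


# Illustrative data only.
observed = {1: "SW-0001", 3: None, 5: "SRV-0042", 7: "SRV-0099"}
inventory = {"SW-0001": 1, "SRV-0042": 6, "SRV-0007": 9}

for finding in audit_rack(observed, inventory):
    print(finding)
```

A rack that produces no findings matches its records. Any finding is a prompt to correct either the label or the documentation before someone unplugs the wrong device.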

In fact, just getting into the room should be a carefully managed process. If someone cannot touch a piece of hardware, it’s far less likely to break. An access system that relies on identification cards and biometrics is a valuable tool to control access. Data center visitors should be required to log the reason for every entrance, and IT managers should make frequent walkthroughs to ensure that standards are being followed.
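The entry process described above (card, biometric check, logged reason) could be modeled roughly as follows. This is an illustrative sketch only: the `AccessLog` class, its `admit` method and the badge IDs are hypothetical, and a real deployment would rely on the access control system's own records.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Entry:
    """One logged entrance (hypothetical record layout)."""
    badge_id: str
    reason: str
    entered_at: datetime


class AccessLog:
    """Admits a visitor only with a valid badge, a biometric match and a reason."""

    def __init__(self, authorized_badges):
        self._authorized = set(authorized_badges)
        self.entries = []

    def admit(self, badge_id, reason, biometric_ok):
        if badge_id not in self._authorized:
            raise PermissionError(f"badge {badge_id} is not authorized")
        if not biometric_ok:
            raise PermissionError("biometric check failed")
        if not reason.strip():
            # Every entrance must state why the visitor is going in.
            raise ValueError("a reason for entry is required")
        entry = Entry(badge_id, reason.strip(), datetime.now(timezone.utc))
        self.entries.append(entry)
        return entry


# Illustrative usage; the badge ID is made up.
log = AccessLog({"B-1001"})
log.admit("B-1001", "Replace failed power supply in rack 12", biometric_ok=True)
print(len(log.entries))
```

Refusing entry when the reason is blank keeps the log useful for later review, since each record then explains why someone was in the room.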

For agencies that operate preproduction, test and development hardware along with production equipment, these systems should be separated into different rooms with different standards for access. If the rooms cannot be physically separated, internal barriers such as wire cages can be used to separate production systems from other equipment, with clear signage that identifies equipment that is particularly sensitive.

Agencies should also separate building networking infrastructure (which requires frequent changes) from data center network infrastructure.

3. Make Smart Choices on Data Center Locations

Agencies should avoid locating data centers in areas commonly hit by natural disasters such as hurricanes, tornadoes, floods and earthquakes.

They should also pay attention to their neighbors. Airports, railroads, parking garages and highways are magnets for problems such as chemical spills and fires. Pipelines of any kind, or buildings that house activities such as hazardous material manufacturing or research, may end up blocking access during emergencies. Further, locations near military operations, medical facilities, embassies, federal buildings or other high-profile targets may be susceptible to collateral damage and, therefore, downtime.

Other building concerns, such as adequate space, heating, air conditioning, power and telecommunications, have to be balanced with physical security. But trying to link human requirements and data center requirements is a mistake. Data centers don’t need to be near people, and they don’t need to be nice places for people to work. Instead, they need to be near good, cheap power and high-speed telecommunications services. Staffing in data centers should be kept to an absolute minimum.

Agencies can also see security benefits by colocating their data centers within a telecommunications or hosting facility. This allows the agency to piggyback on the facility’s security and engineering staff.

Sites should also be selected and built out to resist security problems. The more windows a building has, the more points of entry an intruder may be able to exploit. Ugly steel doors and solid concrete walls are an effective barrier against weather problems and human intrusion.

Actual attacks are uncommon, but vandalism and theft are not. Agencies that bury their power and telecommunications cables improve their protection against both foul play and weather. Targets that are valuable to thieves, such as air conditioning systems, copper piping and high-current power wiring, should be carefully secured. Thieves looking for recyclable materials have ruined cooling systems worth $50,000 by stealing $50 worth of copper piping.