The History of Federal Data Centers [#Infographic]

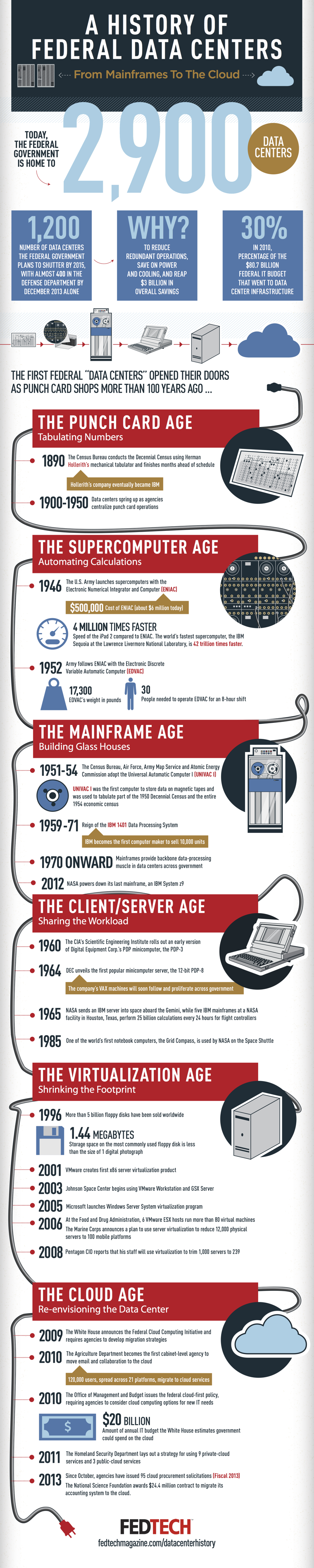

In one form or another, data centers have been at the core of the federal government’s technology infrastructure for more than 100 years. The government’s interest in computers was sparked years before the first machines were ready for widespread use, and data centers are now vital to most agencies’ daily operations.

In the early days of computing, mechanical devices performed simple mathematical calculations, albeit considerably faster than humans could. In 1890, the Census Bureau used Herman Hollerith’s mechanical tabulator to conduct the decennial census. The count was finished months ahead of schedule, forever changing the government landscape.

It took decades to move from punch-card machines to supercomputers. The military understood the value of computers early on, and the Army was a pioneer in developing the first programmable, general-purpose electronic digital computer, known as ENIAC. At the time, it cost $500,000, equivalent to about $6 million today. EDVAC, ENIAC’s successor, required 30 people to operate and weighed nearly nine tons.

NASA was another agency that recognized the power of computers and data centers in the early years. By the mid-1960s, NASA had already sent a computer into space; by 1985, the agency had sent a notebook computer, the GRiD Compass, on a Space Shuttle mission.

In the early 1990s, the general public and a large base of federal employees began to discover the advantages of working with computers. Personal computers increased the need for mainframes and later for server farms. The influx eventually led to a bloated and expensive infrastructure within the government. Data centers are notoriously expensive to run and maintain, and the swell prompted the Office of Management and Budget to create the Federal Data Center Consolidation Initiative in 2010. The project was a move to optimize the use of data centers, whose utility is growing rapidly as cloud computing becomes the norm.

The history of federal data centers is far from complete. Here is a look at how they have evolved over the past 120 years.