NOAA Shares Data in the Cloud

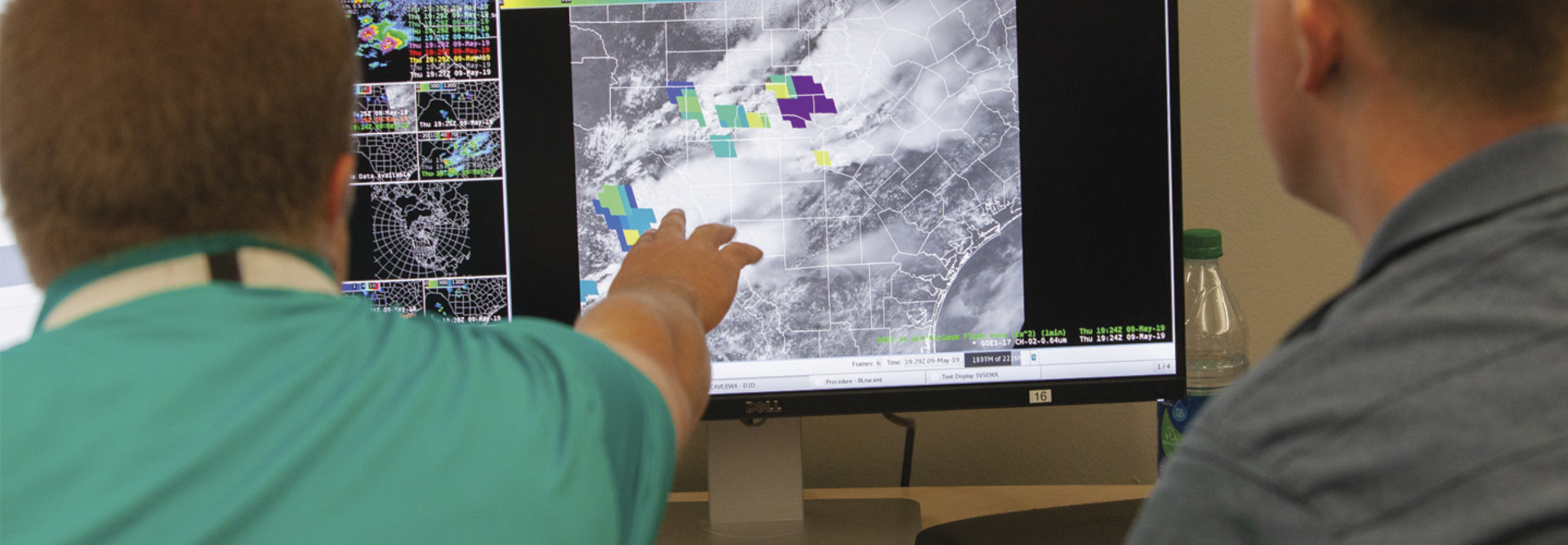

In December, NOAA announced separate partnerships with major cloud providers, including Google Cloud and Microsoft. These multiyear agreements give the public access to the agency’s vast collection of environmental data sets by way of the cloud.

Under their contracts, the cloud providers can charge a fee for compute or other data processing services, but they must provide free access to the data itself. This is no small feat, as the agency produces tens of terabytes of data every day from sources including satellites, radar, ships and weather models.

“Cloud-based storage and processing is the future,” Neil Jacobs, acting NOAA administrator, said in a December press release. “Not only will this improved accessibility enhance NOAA’s core mission to protect life and property, but it will also open up new and exciting areas of research at universities and significant market opportunities for the private sector.”

Big Data Project Drives Access and Innovation

The effort to share this data comes about thanks to NOAA’s Big Data Project. The project seeks to connect the agency’s data, through cloud industry partners, with the entrepreneurial energy of the U.S. economy, driving both open access and economic innovation at the same time.

The Big Data Project is one element of the Commerce Department’s 2018-2022 strategic plan, supporting the department’s efforts to reduce extreme weather impacts through improved access to NOAA’s data and improved partnerships with the country’s weather industry.

“The NOAA Big Data Project, which is the first public-private partnership of its kind in the U.S. government, will help accelerate new areas of scientific and economic growth,” Jacobs said.

NOAA Applies Machine Learning to Forecasting

It didn’t take long for this public-private partnership to bear fruit. Google recently released a white paper, “Machine Learning for Precipitation Nowcasting from Radar Images,” that sheds light on the search giant’s weather forecasting research. Specifically, Google is applying machine learning models to NOAA data to forecast precipitation.

The technique is called “precipitation nowcasting.” It produces rain forecasts covering the next zero to six hours at a 1-kilometer resolution, with a total latency of five to 10 minutes. In simpler terms, precipitation nowcasting can provide a short-term, localized rain forecast in the time it takes to slip on your boots and raincoat and grab your umbrella before heading out the door.

Google’s Nowcasting Model Matches Up

Compare this model to NOAA’s current approach to one- to 10-day global forecasts, which involves processing about 100 terabytes of remote sensing data. Processing that much data strains the available computational resources. Spatial resolution is limited to 5 kilometers, so the forecast lacks localized focus. Only three to four data runs can be processed per day, meaning that the results being shared with users are already six hours old.
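The figures above lend themselves to a quick back-of-the-envelope check. The sketch below is illustrative arithmetic only, not NOAA’s or Google’s code; it assumes model runs are evenly spaced through the day and that grid cells are square, and the function names are made up for this example.

```python
# Back-of-the-envelope arithmetic for the run cadence and grid resolution
# quoted in the article. Assumptions: evenly spaced runs, square grid cells.
HOURS_PER_DAY = 24


def hours_between_runs(runs_per_day: int) -> float:
    """Interval between evenly spaced model runs, in hours."""
    return HOURS_PER_DAY / runs_per_day


def cell_count_ratio(coarse_km: float, fine_km: float) -> float:
    """Number of fine grid cells needed to cover one coarse grid cell."""
    return (coarse_km / fine_km) ** 2


if __name__ == "__main__":
    for runs in (3, 4):
        gap = hours_between_runs(runs)
        print(f"{runs} runs per day -> one run every {gap:.0f} hours")
    ratio = cell_count_ratio(5, 1)
    print(f"1 km grid vs. 5 km grid -> {ratio:.0f}x as many cells")
```

Under those assumptions, four runs a day works out to one run every six hours, three runs to one every eight hours, and a 1-kilometer grid needs 25 times as many cells as a 5-kilometer grid to cover the same area.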

When matched up against traditional forecasting tools such as the High-Resolution Rapid Refresh (HRRR) model, the optical flow model and the persistence model, Google’s nowcasting outperforms all three in short-term forecasting. HRRR overtakes nowcasting only once the prediction horizon (how far into the future the forecast projects) extends beyond six hours.
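For readers unfamiliar with the persistence model, it is the simplest of these baselines: it assumes the most recent observation will not change, so every future time step gets the same forecast. The sketch below illustrates that idea on a tiny made-up grid. It is not Google’s or NOAA’s code; the grid values are invented, and mean squared error is used here only as an illustrative score, which may differ from the metrics in the white paper.

```python
from typing import List

Grid = List[List[float]]  # rain rate per grid cell (illustrative units)


def persistence_forecast(latest: Grid, n_steps: int) -> List[Grid]:
    """Return n_steps forecasts, each an unchanged copy of the latest observation."""
    return [[row[:] for row in latest] for _ in range(n_steps)]


def mean_squared_error(forecast: Grid, observed: Grid) -> float:
    """Average squared difference between forecast and observation over all cells."""
    diffs = [
        (f - o) ** 2
        for f_row, o_row in zip(forecast, observed)
        for f, o in zip(f_row, o_row)
    ]
    return sum(diffs) / len(diffs)


if __name__ == "__main__":
    latest = [[0.0, 2.0], [1.0, 0.0]]  # made-up frame at forecast time
    later = [[0.0, 0.0], [2.0, 1.0]]   # made-up frame observed one step later
    forecasts = persistence_forecast(latest, n_steps=3)
    print(mean_squared_error(forecasts[0], later))  # prints 1.5
```

Because the rain in this toy example moves between frames, the persistence forecast scores poorly, which is why it serves as a floor that any more capable model is expected to beat.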

Meteorologists aren’t likely to abandon the more traditional models anytime soon. But nowcasting could fill a niche that the other models serve poorly, providing more accurate, localized forecasts in nearly real time. For citizens and federal agencies out in the field contending with extreme weather events, a tool like Google’s nowcasting couldn’t have come at a better time.