Cloud-Based Tools Ease the Load for NASA, NOAA Websites

On Aug. 21, 2017, as a solar eclipse cast a slice of darkness across the continental U.S., NASA captured the event for millions who couldn’t make it to the narrow path of totality.

The space agency launched a website dedicated to the rare celestial event and hosted multiple livestreams on NASA TV. Roughly 12 million people watched video on NASA sites that day, while another 38 million watched the streams on YouTube, Facebook and other media sites, says Brian Dunbar, internet services manager for NASA.

But even as the sky went dark, NASA’s sites did not, thanks to careful planning plus cloud-based hosting and content delivery networks.

Speed is one of the most important aspects of building a government website, which is why agencies turn to cloud providers to improve performance. Slow page loads can detract from an agency’s mission and turn citizens away.

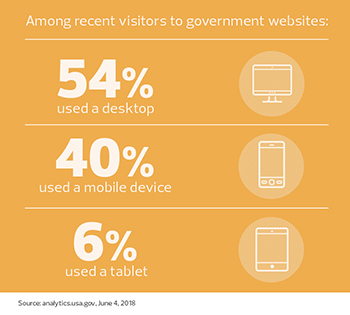

A 2016 Google study found that a little more than half of individuals will abandon a mobile website if it takes more than three seconds to load.

“After a period of time, folks will start tuning out,” says Alan McQuinn, senior research analyst for the Information Technology and Innovation Foundation. “And that’s important, because a website is the face of the agency for consumers.”


NASA Used Past Experience to Plan for Website Traffic Spikes

NASA has been dealing with web traffic surges since the mid-1990s, when an eager public flooded NASA.gov to view photos from Mars Pathfinder, says Dunbar. Back then, the agency’s website lived on two servers in the basement of its Washington, D.C., headquarters.

On Jan. 31, 2003, it moved to its first commercial hosting service. Just a few hours after the transition was completed, the space shuttle Columbia disintegrated upon re-entry, and stunned citizens flocked to NASA’s website to learn more about the disaster and the seven astronauts who died.

“So many people came to our site looking for information that we burned through a year’s worth of bandwidth in three days,” says Dunbar.

By the time of the 2017 eclipse, NASA had contracted with cloud providers and content delivery networks to handle the deluge. CDNs cache site content at different points of presence around the country in order to deliver it more quickly and reliably to nearby users.
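
To make the caching idea concrete, here is a minimal sketch of how a single point of presence might behave. It is an illustration only, not a description of NASA's actual setup: the `EdgeCache` class, the `hypothetical_origin` function, the `/eclipse` path and the 60-second lifetime are all assumptions made for the example.

```python
import time
from typing import Callable, Dict, Tuple


class EdgeCache:
    """Toy point-of-presence cache: serves a stored copy until it expires."""

    def __init__(
        self,
        fetch_from_origin: Callable[[str], str],
        ttl_seconds: float = 60.0,  # assumed lifetime, chosen for illustration
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch_from_origin
        self._ttl = ttl_seconds
        self._clock = clock
        # Maps a path to (expiry time, cached body).
        self._store: Dict[str, Tuple[float, str]] = {}
        self.origin_requests = 0

    def get(self, path: str) -> str:
        now = self._clock()
        cached = self._store.get(path)
        if cached is not None and cached[0] > now:
            # Fresh copy available: answer locally, origin is not contacted.
            return cached[1]
        # Missing or expired: fetch once from the origin and store the result.
        self.origin_requests += 1
        body = self._fetch(path)
        self._store[path] = (now + self._ttl, body)
        return body


def hypothetical_origin(path: str) -> str:
    """Stand-in for the agency's origin server (not a real endpoint)."""
    return f"<html>content for {path}</html>"


edge = EdgeCache(hypothetical_origin, ttl_seconds=60.0)
for _ in range(1000):
    edge.get("/eclipse")

print(edge.origin_requests)
```

In this sketch, a thousand nearby visitors requesting the same page result in a single request to the origin server, which is the effect that lets a site ride out a surge.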

“The good news is that we knew when the eclipse was coming, so we started planning a year out,” says Ian Sturken, NASA’s web and cloud services manager. “We started doing tabletop exercises to make sure we could handle the load, and it worked just peachy. The amount of content delivered was astronomical, but there was no failure.”

Cloud Gives Agencies Flexibility to Meet Demand

Though total solar eclipses are rare in the United States, more common events like launches and NASA press conferences can cause normal traffic to spike by a factor of 10, says Sturken. The key to success is the ability of cloud-based services to scale and meet rapid increases in demand.

“The problem with hosting in your own data center is that your assets are limited,” he says. “You need to buy at the top end of what you think you’re going to consume. You don’t have to do that in the cloud. You can start on a low note and make it larger as you go.”
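
Sturken's point can be illustrated with some rough arithmetic. The sketch below compares buying for the peak with scaling hour by hour. Every number in it is hypothetical (the baseline load, the per-server capacity and the two-hour spike); only the tenfold surge comes from the figures cited above.

```python
import math
from typing import List


def servers_needed(requests_per_hour: int, capacity_per_server: int) -> int:
    """Smallest number of servers that can absorb the given hourly load."""
    return max(1, math.ceil(requests_per_hour / capacity_per_server))


def fixed_server_hours(traffic: List[int], capacity_per_server: int) -> int:
    """Own data center: size for the busiest hour and run that fleet all day."""
    peak = max(servers_needed(load, capacity_per_server) for load in traffic)
    return peak * len(traffic)


def elastic_server_hours(traffic: List[int], capacity_per_server: int) -> int:
    """Cloud-style scaling: run only what each hour actually requires."""
    return sum(servers_needed(load, capacity_per_server) for load in traffic)


# Hypothetical day: 22 ordinary hours plus a two-hour spike at 10x baseline.
baseline = 10_000   # assumed requests per hour on a normal day
capacity = 5_000    # assumed requests per hour one server can handle
traffic = [baseline] * 22 + [baseline * 10] * 2

print("fixed:", fixed_server_hours(traffic, capacity))
print("elastic:", elastic_server_hours(traffic, capacity))
```

Under these assumed numbers, the fixed fleet consumes 480 server-hours against 84 for the elastic one. The gap widens the rarer and sharper the spike is.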

A huge part of NASA’s mission is sharing information, adds Dunbar. Having a web presence that scales with public demand is essential.

“We’ve always taken very seriously the clause in our enabling legislation that requires us to disseminate information about our programs to the widest extent practicable,” Dunbar says. “In the ’90s it was figuring out how to use the World Wide Web. Now our social media teams are all over the place, engaging people in a conversation about NASA. It’s something we take great pride in.”

FEC Uses Cloud Solutions to Save Money

If there’s anything more predictable than a solar eclipse, it’s an election. That’s why every three months, Federal Election Commission officials brace for an influx of visitors to their website.

“Our website traffic is cyclical, centering on our campaign finance filing deadlines, election years and election dates,” says Christian Hilland, deputy press officer for the agency. “Therefore, we have the good fortune of being able to plan for spikes in visits to fec.gov.”

The biggest traffic loads hit during quarterly deadlines, when campaigns are required to make public the amount and sources of funding they’ve received. During the year-end filing period in January, page views hit nearly 260,000 over a two-day period — more than five times the typical traffic, says Hilland.

The number of political action committees and candidates has grown steadily over the years, as has the amount of money each candidate has raised, says Hilland. That results in ever larger data sets that need to be downloaded as users request them.

“The commission’s goal is to make filings available through the website within seconds after a report is received electronically,” he says. “That can be particularly challenging when millions of records are received in a single day.”

In the past, commission IT staff needed to predict web traffic several years in advance, and then buy powerful servers that could handle the high-traffic periods but would remain underutilized at other times. Using cloud.gov, a custom platform created for the federal government, saves the agency an estimated $1.2 million annually.

“Since migrating to a cloud system, we no longer need to buy or allocate servers to handle an influx of traffic,” says Hilland.

NOAA Uses the Cloud to Handle Surges During Storm Season

Compared with astronomical events or campaign finance deadlines, the weather is both less predictable and more important to people’s day-to-day lives. The National Oceanic and Atmospheric Administration operates some of the most heavily visited sites in government, such as weather.gov for the National Weather Service and hurricanes.gov for the National Hurricane Center.

Last fall, as hurricanes Irma and Maria swirled over the southeastern United States, hurricanes.gov received over 1 billion hits in one day, says Cameron Shelton, director of the Services Delivery Division in the Office of the CIO at NOAA.

“Last year’s traffic came as a surprise,” says Shelton. “Luckily, we were prepared. But it’s not unusual to have several million hits in a day, and several hundred million for a large hurricane.”

All told, the site generated more than 500 million page views during that period; Irma alone accounted for three times the most web traffic any single storm had previously produced.

Like NASA, NOAA used to maintain public-facing sites in-house. Last year, anticipating people checking the weather for the eclipse, NOAA contracted with a content delivery network.

“We still have our web farms, which are similar to CDNs,” Shelton says. “But we’re using the cloud to deal with surges rather than invest in hardware.”