More Data Means More Capability for Agencies

Data initiatives such as those at the FDA and Sandia National Laboratories, one of three national laboratories that maintain the U.S. nuclear weapons stockpile, are driven by need as well as by improved capabilities.

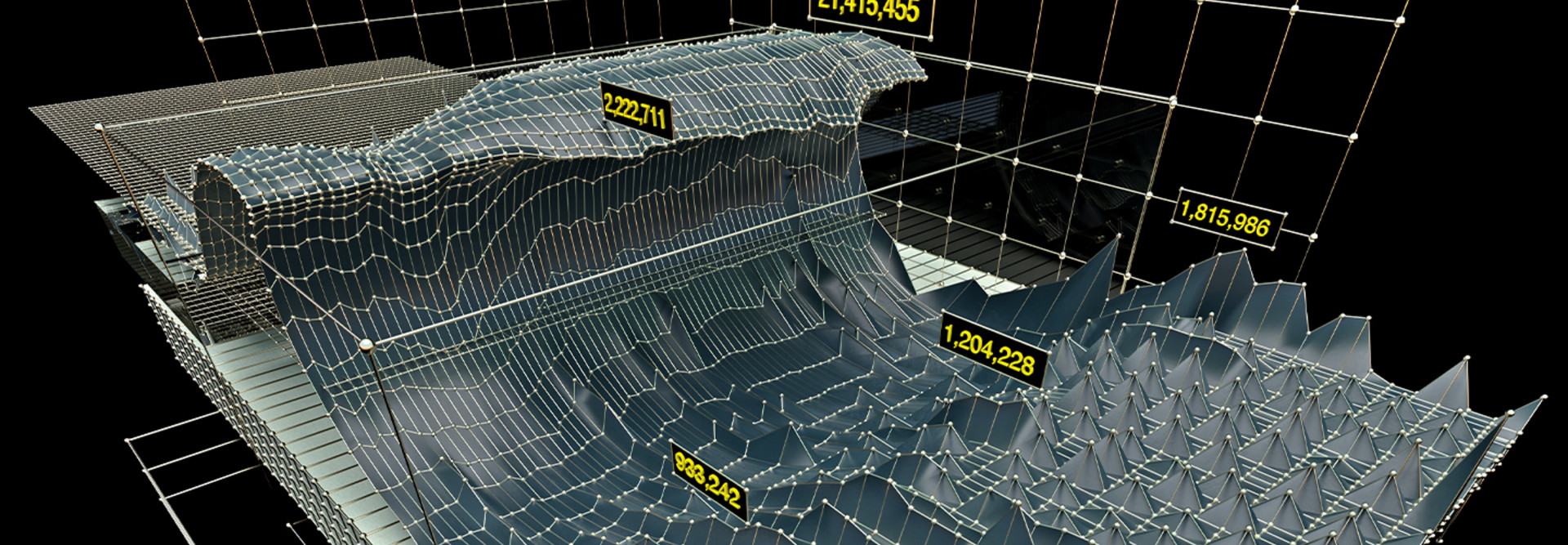

“We are producing so much data,” says Vince Urias, cybersecurity research scientist and distinguished member of the technical staff at Sandia. “The advent of cheaper storage and better indexing, and the performance of Splunk and other emerging tools, have led to our ability to ask questions about fairly large data sets very quickly, which allows us to store and pivot off of data at a rate that I don’t think has been possible in the last 10 years.”

Business analytics, machine learning tools and artificial intelligence are not new, points out Laura DiDio, principal of ITIC, a technology research firm. Organizations have been using them for years to collect data. But only 30 to 40 percent of them were actually analyzing that data to make it actionable.

That’s changed under COVID. “Now there’s a compelling need to not only collect the data but also use it to streamline operations and take meaningful action right away,” DiDio says.

Data Drives Action at the FDA

DMAP, which builds upon FDA’s 2019 Technology Modernization Action Plan, was announced this year, but many driver projects were already in place.

For instance, in spring 2019, the FDA began piloting an artificial intelligence capability to screen imported seafood. The initiative uses machine learning to analyze decades of data on past shipments so that the agency can quickly identify which shipments can move through clearance faster and which should receive extra scrutiny.